The Synthesizer

Introduction

To synthesize is to combine separate elements to produce something new. The components of traditional instruments—for instance the strings, fingerboard, bridge, and body of a string instrument—are a set of elements whose construction and musical function are predetermined by the instrument manufacturer: the strings vibrate over a fingerboard and those vibrations are amplified by a bridge and the body of the instrument.

By contrast, synthesizers are a collection of elements—oscillators, mixers, filters, and amplifiers—that the performer (synthesist) configures and programs at will to produce practically any desired sound or musical effect. Basically, oscillators generate waveforms, mixers combine waveforms, filters shape sound, amplifiers make waveforms audible, and control devices such as wheels, sliders, joysticks, electronic pattern generators, or computer interfaces allow performers to manipulate the resulting sounds in musically expressive ways.

We may, therefore, define the synthesizer as a musical instrument that produces sound by combining, shaping, and processing electronically generated sound sources. Its main characteristics include electricity, the ability to combine and modify basic sonic elements, and sophisticated degrees of control and programmability.

Throughout history, people have experimented with many new types of instruments. Some of them lasted, many didn't. In the grand scheme of things, the synthesizer is a very new instrument.

Let's look at some criteria by which all instruments are measured:

- Versatility: An instrument must be versatile enough to be used under many different circumstances—musically speaking.

- Identity: An instrument should have a clear sound persona or sound ideal, that is, the way we have come to expect that instrument to sound.

- Development: In order to be musically expressive, an instrument must be fully developed in the way it is built and also in terms of instrumental technique—how it is played.

How does the synthesizer meet each of these criteria?

Developed Instruments Versus the Synthesizer

The following table illustrates the main differences between a highly developed musical instrument—for instance, the piano or violin—and the synthesizer.

As you can see, there are big differences between a highly developed instrument and one that isn't. Highly developed instruments fulfill the conditions outlined above. The synthesizer does not meet any of them, but that is probably its greatest strength.

Synthesizers are capable of producing an almost infinite array of sounds, offering musicians vastly expanded expressive possibilities and musical scope. Because synthesizers come in practically every performance medium (keyboard, string, wind, or percussion interfaces), performers are not limited to a specific interface to produce a given sound. For instance, woodwind synths allow musicians to use familiar fingerings and techniques to play an unlimited range of new sounds. A woodwind synth can be played like a saxophone but sound like a trombone, piano, electric guitar, bass, or virtually any other sound that lets the performer achieve the desired musical effect.

Listen to the following pieces by David Antony Clark, and notice the wide range of timbres that the synthesizer can produce.

Composer: David Antony Clark
"A Land Before Eden"

Composer: David Antony Clark
"Rainmakers"

Synthesizer Classification

Synthesizers, or "synths" for short, can be classified into three general categories:

- Analog synthesizers

- Digital synthesizers

- Samplers

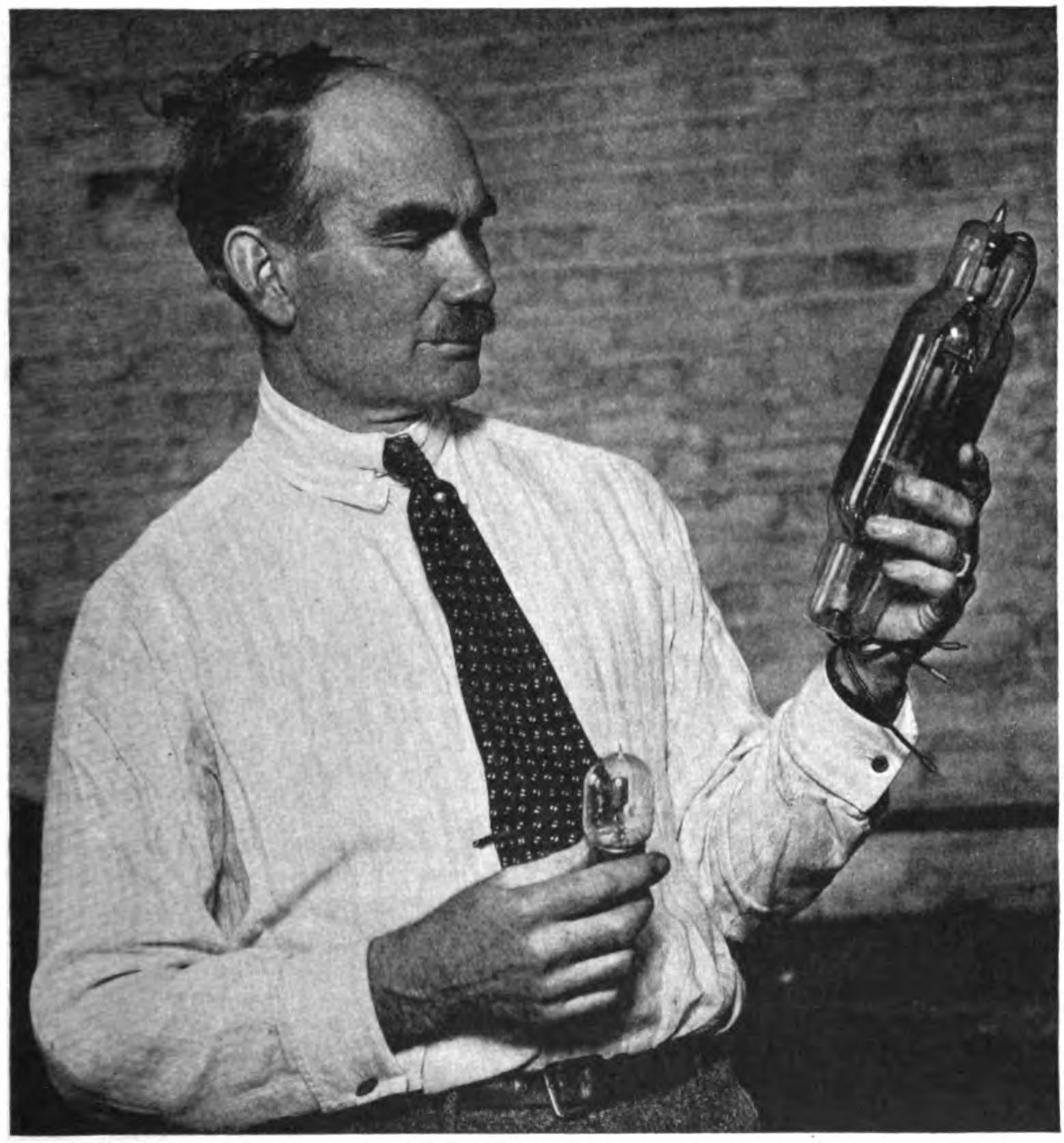

Before briefly describing those categories, however, it helps to clarify a distinction: strictly speaking, electric instruments use electricity to produce sound, whereas electronic instruments use vacuum tubes or semiconductors to do so. Semiconductors, as part of integrated circuits, are the heart of modern electronics: computers, TVs, telephones, and, of course, music synthesizers. They are ideal for controlling electrical current because, as their name suggests, they are neither pure conductors nor pure insulators of electricity, which allows very flexible control of current. The most common material in semiconductor devices is silicon.

Analog Synthesizers

Analog synthesizers use analog circuits and analog signals to generate sound electronically. The voltage-controlled oscillator is the heart of an analog synthesizer. Early analog synthesizers of the 1920s and 1930s used vacuum-tube and electromechanical technologies to generate sound.

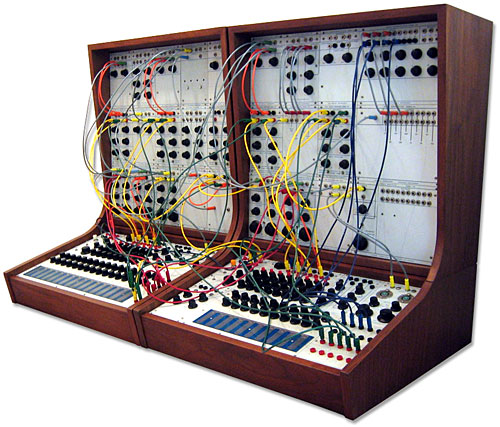

Later, in the mid-1960s, analog synthesizers such as the Moog used voltage-controlled oscillators, filters, and amplifiers built as independent sound-producing modules connected by patch cables.

Digital Synthesizers

A digital synthesizer combines digital signal processing (DSP) techniques with built-in sound-synthesis algorithms (that is, mathematical representations of sounds) to make musical sounds. Like CD players, digital synthesizers work by producing a stream of numbers at a steady sample rate. Those numbers are then converted to analog form and sent to speakers or headphones to produce sound.
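To make the idea of "a stream of numbers at a steady sample rate" concrete, here is a minimal Python sketch that computes one such stream for a pure sine tone. The 44,100 Hz rate is the CD-audio rate and is an assumption chosen for illustration; the function name `sine_stream` is hypothetical and not part of any synthesizer's software. A real digital synth would hand these numbers to a DAC rather than store them in a Python list.

```python
import math

SAMPLE_RATE = 44_100  # samples per second (CD-audio rate, assumed for this sketch)


def sine_stream(frequency_hz, duration_s, sample_rate=SAMPLE_RATE, amplitude=1.0):
    """Return one number per sample tick describing a sine tone."""
    n_samples = round(duration_s * sample_rate)
    return [
        amplitude * math.sin(2 * math.pi * frequency_hz * n / sample_rate)
        for n in range(n_samples)
    ]


# 10 milliseconds of concert A (440 Hz): 441 numbers at 44.1 kHz
samples = sine_stream(440.0, 0.01)
```

The only thing that changes from one moment to the next is the number computed for each tick; the steady rate at which those numbers are produced is what lets the converter rebuild a continuous waveform from them.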

Samplers

A sampler is similar to a synthesizer in some respects, but instead of generating sounds, it uses recordings (or "samples") of sounds that are pre-loaded or recorded by the user. These sounds can be played back by means of the sampler program itself, a keyboard, a sequencer, or another triggering device.
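One thing a sampler typically does is play a single recording back at different pitches. A common approach, sketched below in Python under simplifying assumptions, is to read through the stored numbers faster or slower: reading at twice the speed raises the pitch by an octave (12 semitones). The function `repitch` is hypothetical, and linear interpolation between neighboring samples is just one simple choice; commercial samplers may use more sophisticated methods.

```python
def repitch(sample, semitones):
    """Play a stored recording back transposed by `semitones` (hypothetical helper).

    Uses linear interpolation between neighboring stored values.
    """
    ratio = 2 ** (semitones / 12)  # +12 semitones -> read twice as fast
    out = []
    pos = 0.0
    while pos <= len(sample) - 1:
        i = int(pos)
        frac = pos - i
        # Blend the current stored value with the next one (or itself at the end).
        nxt = sample[i + 1] if i + 1 < len(sample) else sample[i]
        out.append(sample[i] + (nxt - sample[i]) * frac)
        pos += ratio
    return out


recording = [0, 1, 2, 3, 4, 5, 6, 7]  # stand-in for a recorded sample
octave_up = repitch(recording, 12)
```

This simple method also changes the length of the sound: played an octave up, the sample lasts half as long.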

Electronic Generation of Sound

Synthesizers generate sounds electronically. As you know, to generate sound you need movement: something has to vibrate. In electronic instruments, this movement is the oscillation of electric current as it alternates back and forth between positive and negative polarity. The parts of the synthesizer that generate sound electronically are called the sound engine.

Sound engines can be monophonic, producing one note at a time, or polyphonic, capable of producing two or more notes simultaneously. One notable early polyphonic synthesizer was the Sequential Circuits Prophet-5, released in 1978 with five-voice polyphony. Six-voice polyphony was standard by the mid-1980s. With the advent of digital synthesizers, 16-voice polyphony became standard by the late 1980s, 64-voice polyphony was common by the mid-1990s, and 128-voice polyphony arrived shortly after.
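Polyphony means the sound engine has a fixed pool of voices to hand out as keys are pressed. The Python sketch below models a five-voice engine (the Prophet-5's voice count) and assumes a "steal the oldest note" policy when every voice is busy. That policy and the `VoiceAllocator` class are illustrative assumptions, not a description of how the Prophet-5 or any specific instrument allocates its voices.

```python
from collections import OrderedDict


class VoiceAllocator:
    """Assign notes to a fixed pool of voices (hypothetical, oldest-note stealing)."""

    def __init__(self, num_voices=5):
        self.num_voices = num_voices
        self.active = OrderedDict()  # note number -> voice index, oldest first

    def note_on(self, note):
        if note in self.active:  # same key pressed again: keep its voice
            self.active.move_to_end(note)
            return self.active[note]
        if len(self.active) < self.num_voices:
            used = set(self.active.values())
            voice = next(v for v in range(self.num_voices) if v not in used)
        else:
            # All voices busy: take the voice of the longest-sounding note.
            _, voice = self.active.popitem(last=False)
        self.active[note] = voice
        return voice

    def note_off(self, note):
        self.active.pop(note, None)  # frees the voice for the next key


engine = VoiceAllocator(5)
chord = [engine.note_on(n) for n in (60, 62, 64, 65, 67)]  # five keys, five voices
```

With all five voices busy, a sixth key press reuses the voice of the note that has been sounding longest, which is why that earlier note would suddenly cut off on a five-voice instrument.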

Movement generates waves. The timbre of the sound you hear depends on the shape of the wave, also called its waveform, while its volume depends on the wave's amplitude.
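The Python sketch below defines four classic waveform shapes as functions of phase (the position within one cycle, from 0 to 1). Rendering them at the same frequency gives the same pitch but a different timbre, while the `amplitude` argument alone changes the volume. The function names are hypothetical; sine, square, sawtooth, and triangle are simply the textbook shapes, not the full set any particular synthesizer offers.

```python
import math


def sine(phase):
    return math.sin(2 * math.pi * phase)  # smooth, pure tone


def square(phase):
    return 1.0 if phase < 0.5 else -1.0  # hollow, reedy tone


def sawtooth(phase):
    return 2.0 * phase - 1.0  # ramps from -1 up to +1 once per cycle: bright, buzzy


def triangle(phase):
    # Rises from -1 to +1 in the first half cycle, falls back in the second.
    return 4.0 * phase - 1.0 if phase < 0.5 else 3.0 - 4.0 * phase


def render(wave, frequency_hz, n_samples, sample_rate=44_100, amplitude=1.0):
    """Same frequency -> same pitch; the wave shape sets timbre, amplitude sets volume."""
    return [
        amplitude * wave((frequency_hz * n / sample_rate) % 1.0)
        for n in range(n_samples)
    ]


quiet_sine = render(sine, 440, 1000, amplitude=0.5)
```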

It is difficult to explain this process without getting too technical, but let's give it a try. There are two basic ways of generating sounds electronically: synthesis and sampling. We are concerned mainly with synthesis, which builds sounds from electronically generated waveforms rather than from recordings.

Sounds are generated and shaped using oscillators, filters, and amplifiers. Think of it as a chain of events. The first link in the chain is, as with any other musical instrument, a sound source. In the synthesizer, this sound source is an oscillator. The next links provide ways of manipulating the sound: audio filters of different types boost, pass, or attenuate certain frequency ranges, and an amplifier sets the level of the signal. In a digital synthesizer, the numbers (digits) representing the sound must then be made audible, so the final two links in the chain are a Digital-to-Analog Converter (DAC), which converts the series of numbers into a smoothly varying voltage, and the loudspeaker to which that voltage is sent.
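The chain of events described above can be sketched in Python as small functions applied one after another. Assumptions: the oscillator produces a sawtooth wave, the filter is a simple one-pole low-pass (one of many possible filter designs), and the last step only prepares 16-bit integers of the kind a DAC accepts; the conversion to voltage itself happens in hardware. All function names are hypothetical.

```python
import math

SAMPLE_RATE = 44_100  # assumed sample rate


def oscillator(freq_hz, n, sr=SAMPLE_RATE):
    # Link 1, sound source: a sawtooth wave, rich in harmonics
    return [2.0 * ((freq_hz * i / sr) % 1.0) - 1.0 for i in range(n)]


def lowpass(signal, cutoff_hz, sr=SAMPLE_RATE):
    # Link 2, filter: one-pole low-pass that attenuates frequencies above the cutoff
    a = 1.0 - math.exp(-2.0 * math.pi * cutoff_hz / sr)
    out, y = [], 0.0
    for x in signal:
        y += a * (x - y)  # move part of the way toward the input each sample
        out.append(y)
    return out


def amplifier(signal, gain):
    # Link 3, amplifier: scales the level (loudness) of the signal
    return [gain * x for x in signal]


def to_dac_codes(signal, bits=16):
    # Link 4 input: clip to [-1, 1] and convert to signed integers for a DAC
    full_scale = 2 ** (bits - 1) - 1
    return [round(max(-1.0, min(1.0, x)) * full_scale) for x in signal]


# 10 ms of a 220 Hz tone, darkened by a 1 kHz filter, at 80% level
codes = to_dac_codes(amplifier(lowpass(oscillator(220.0, 441), 1_000.0), 0.8))
```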

Synthesizer Performance

Synthesizer performance involves programming sounds (sound objects), either ahead of the performance or in real time, and mastering the gestures and techniques needed to manipulate various instrument configurations and controllers with musically idiomatic results.

A synthesizer performer, sometimes referred to as a synthesist, has several elements at his or her disposal, not only for reproducing sound but also for modifying existing sounds or creating totally new ones. The three main elements are the pitch selector, controllers, and programming controls.

Pitch Selector

The synthesist selects pitches using a pitch selector, which is nearly always modeled after a traditional instrument: keyboard, wind, percussion (mallets or drum sets), or strings (e.g., violin). The big difference is that when the synthesist presses a key on the synthesizer keyboard, he or she can decide what function that key should perform aside from generating a particular pitch. Depending on pre-configured (programmed) parameters or on real-time input, that key can send signals that trigger different sounds or sound effects in other interconnected devices.

Controllers

Further real-time shaping of the sound is accomplished using ancillary controllers, which can be continuous or discrete. Continuous controllers allow performers to manipulate the sound through continuous physical motion. They include wheels, joysticks, sliders, ribbons, breath and foot controllers, and key pressure. The most common continuous controllers on a synth are a pair of wheels, or a single bidirectional joystick, dedicated to pitch bend and modulation. Discrete controllers work as two-state switches that send on and off values. They can be foot-operated (pedals or buttons) or hand-operated (buttons and switches).
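As an illustration of how a continuous controller's position becomes a musical change, the Python sketch below maps a pitch-bend wheel to a frequency. It assumes MIDI's 14-bit pitch-bend value (0 to 16383, with 8192 meaning the wheel is centered) and a bend range of plus or minus two semitones, a common setting that many instruments let you change. The function names are hypothetical.

```python
def bend_to_semitones(value, bend_range=2.0):
    """Convert a 14-bit pitch-bend value (0-16383, 8192 = centered) to semitones."""
    if not 0 <= value <= 16383:
        raise ValueError("pitch-bend value must be between 0 and 16383")
    return (value - 8192) / 8192 * bend_range


def bent_frequency(base_hz, value, bend_range=2.0):
    """Apply the bend to a base frequency: each semitone multiplies it by 2**(1/12)."""
    return base_hz * 2 ** (bend_to_semitones(value, bend_range) / 12)


wheel_down = bent_frequency(440.0, 0)  # wheel pulled all the way down: about a whole step below A
```

Because 16383 is one step short of 8192 + 8192, a fully raised wheel lands a hair below the full two semitones.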

Programming Controls

Synthesizer buttons, knobs, dials, and sliders give the synthesist access to two kinds of programming controls:

- Editing controls, which change program parameters. A program is a set of stored values that describe sound parameters and data routing within or between devices. Programs are selected by program number, which appears on the synth's display screen. A program is also referred to as a patch (as in the verb "to patch"), a throwback to the days when physical cords were used to patch, or connect, different sound modules in a synth. A cluster of programs is called a bank.

- Mode selection controls, which allow the performer to change between play, edit, and store modes. As their names imply, play mode is used when the synth is configured for real-time performance; edit mode puts the synth in an edit or programming state; and store mode is used to store and retrieve data.
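To make the program, patch, and bank vocabulary concrete, here is a hypothetical Python data model: a program is a small record of stored parameter values, and a bank is an ordered collection of programs selected by number, with a `store` method standing in for store mode. The field names and the numbering from 1 are assumptions chosen for illustration; real synthesizers store far more parameters and differ in how they number programs.

```python
from dataclasses import dataclass, field


@dataclass
class Program:
    """A stored set of sound parameters, also called a patch (hypothetical fields)."""
    name: str
    waveform: str = "sawtooth"
    filter_cutoff_hz: float = 2000.0
    amp_gain: float = 0.8


@dataclass
class Bank:
    """A cluster of programs, selected by program number."""
    name: str
    programs: list = field(default_factory=list)

    def select(self, program_number):
        # Assumption: programs are numbered from 1, as shown on the display.
        if not 1 <= program_number <= len(self.programs):
            raise ValueError(f"bank {self.name} has no program {program_number}")
        return self.programs[program_number - 1]

    def store(self, program_number, program):
        # Overwrite an existing slot, roughly what store mode does (illustrative only).
        self.select(program_number)  # validates the program number
        self.programs[program_number - 1] = program


bank_a = Bank("A", [Program("Warm Pad", filter_cutoff_hz=800.0),
                    Program("Bright Lead", waveform="square")])
```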

Accomplished synthesists are no different from any other accomplished instrumentalist (pianist, violinist, guitarist, or trombonist, to name a few) in the sense that they should possess very specific and highly developed technical skills and musicianship. These include the ability to:

- Sight read melodic lines, chords, and chord symbols.

- Interpret synthesizer-specific expression marks, such as pitch bend and modulation, as well as standard articulation and expression indications for traditional wind, brass, string, keyboard, or percussion acoustic instruments.

- Read fluently in the four most common clefs—treble, bass, alto, and tenor—as well as percussion notation.

- Improvise melodies and accompaniments in a wide variety of musical styles.

- Access large collections of synthesizer programs and samples, and modify them in real time depending on specific performance situations.

- Perform in many different musical styles in an idiomatic way, that is, reproducing the sound, performance characteristics, and nuances of the original instruments in the correct musical context.

- Play with a well-developed technique on a variety of configurations: traditional horizontal keyboard, strap-on, standard ancillary controllers, and non-keyboard controllers.

- Work fluently with MIDI (Musical Instrument Digital Interface) protocol, computer hardware and software, and audio devices as needed for real-time stage or studio work.

None of the capabilities described above would be possible without MIDI. The MIDI Association—a free non-profit, member community of people that create music and art with MIDI—defines MIDI as "an industry standard music technology protocol that connects products from many different companies including digital musical instruments, computers, tablets and smartphones." Their website goes on to explain that "MIDI is used everyday around the world by musicians, DJs, producers, educators, artists, and hobbyists to create, perform, learn, and share music and artistic works. Nearly every hit album, film score, TV show and theatrical production uses MIDI to connect and create."

How did MIDI originate? As we shall see below, from the late 1940s on, there was a twenty-year period of compositional emphasis when the synthesizer was mainly used in conjunction with tape manipulation and audio processing of recordings of both acoustic sounds (musique concrète, "concrete music") and electronic sources (elektronische Musik, "electronic music"). Shortly thereafter, the two currents merged into what is simply known as "electronic music." Huge instruments like the RCA Mark II Electronic Music Synthesizer, installed at the Columbia-Princeton Electronic Music Center, were mainly used for sound manipulation and recording rather than live performance.

However, the development of the transistor and other semiconductor devices, starting in the late 1940s, made it possible to build small, modular, portable synthesizers that used voltage to control musical parameters. Live avant-garde electronic ensembles started to appear in the late 1950s and throughout the 1960s.

The 1960s saw new ways of producing sound through synthesis and a gradual but constant change from analog to digital synthesizer technology. Commercially viable monophonic, and later polyphonic, synths proliferated during the 1970s. The first synthesizer festival was held in 1974. Synthesizers, like the computers built into them, became programmable. Analog-to-Digital Converters (ADCs) made digital recording (sampling) possible: acoustic sounds could now be converted and stored as series of digits. New commercial machines, particularly Yamaha's DX7 synthesizer released in 1983, revolutionized the market. Synthesizers became multi-timbral, that is, capable of producing more than one sound or tone color at a time (multi = many, timbral = timbres). The problem was that synthesizers produced by different manufacturers didn't communicate with each other. Each company followed its own set of protocols for conveying digital information, so machines manufactured by Yamaha didn't "talk" to those by Korg, Kurzweil, or Kawai, to name a few. Enter MIDI.

Proposed in 1981 by Sequential Circuits and refined by a consortium of manufacturers during the following year, the MIDI protocol allows communication between electronic music devices of any kind. Two MIDI-equipped instruments, one by Sequential Circuits and another by Roland, were successfully connected at the January 1983 show of the National Association of Music Merchants (NAMM) in California.
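At the byte level, the messages MIDI devices exchange are small. The Python sketch below builds Note On and Note Off messages as laid out in the MIDI 1.0 specification: a status byte (0x90 for Note On, 0x80 for Note Off, combined with one of 16 channels) followed by two 7-bit data bytes for note number and velocity. The helper names are hypothetical, and sending the bytes to an actual instrument would require a MIDI interface and library not shown here.

```python
def _channel_message(status_nibble, channel, note, velocity):
    """Pack a 3-byte MIDI channel message (hypothetical helper)."""
    if not 1 <= channel <= 16:
        raise ValueError("MIDI channels are numbered 1-16")
    if not (0 <= note <= 127 and 0 <= velocity <= 127):
        raise ValueError("MIDI data bytes are 7-bit values (0-127)")
    # Status byte: high nibble = message type, low nibble = channel 0-15.
    return bytes([status_nibble | (channel - 1), note, velocity])


def note_on(channel, note, velocity):
    return _channel_message(0x90, channel, note, velocity)


def note_off(channel, note, velocity=64):
    # Release velocity; 64 is used here as a neutral default (an assumption).
    return _channel_message(0x80, channel, note, velocity)


middle_c = note_on(1, 60, 100)
```

For example, `note_on(1, 60, 100)` produces the three bytes 0x90 0x3C 0x64, which asks any instrument listening on channel 1 to start playing middle C at velocity 100, regardless of which company built it.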

A Very Short Chronology of Electronic Sound Generation

The history of electronic music instruments is a fascinating and colorful one, full of dead ends, missed opportunities, and imaginative geniuses who, as in any other field of human endeavor, pursued dreams and ideas, sometimes intuitively and at other times with full scientific rigor. By necessity, the following is a very condensed outline that includes some of the most prominent figures and instruments in this wonderful and often surprising story.

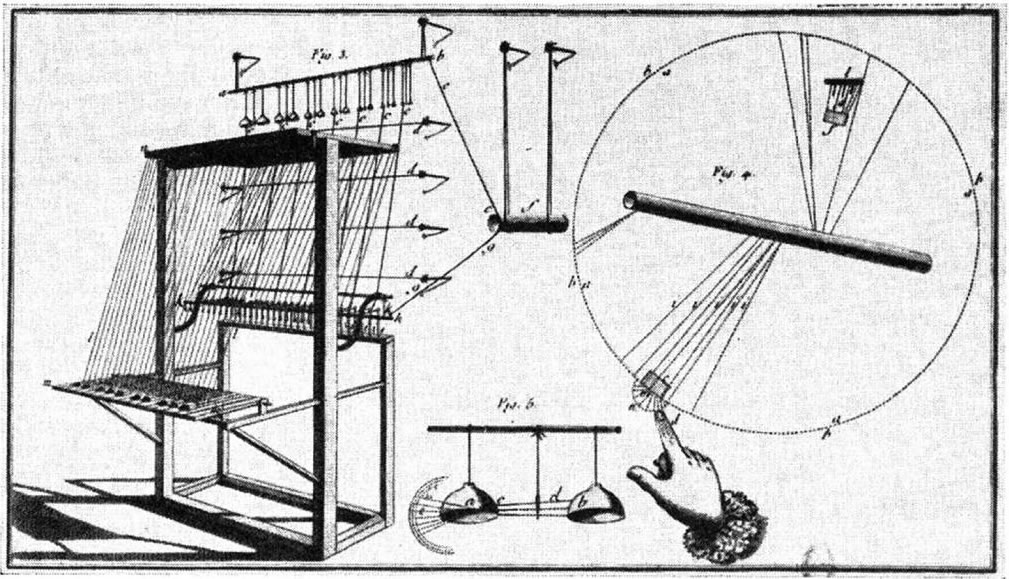

The history of the synthesizer goes all the way back to Paris in 1759, where the French Jesuit priest Jean-Baptiste Delaborde invented the clavecin électrique (electric harpsichord), the earliest surviving electrically powered musical instrument. The press and the public admired the innovative machine, but it wasn't developed further. The model Delaborde himself built survives and is kept at the Bibliothèque Nationale de France in Paris.

Composer: Olivier Messiaen
"Turangalîla-symphonie: V. Joie du sang des étoiles"

Composer: Olivier Messiaen
"Feuillet inédit No. 4 (Unpublished page No. 4)"

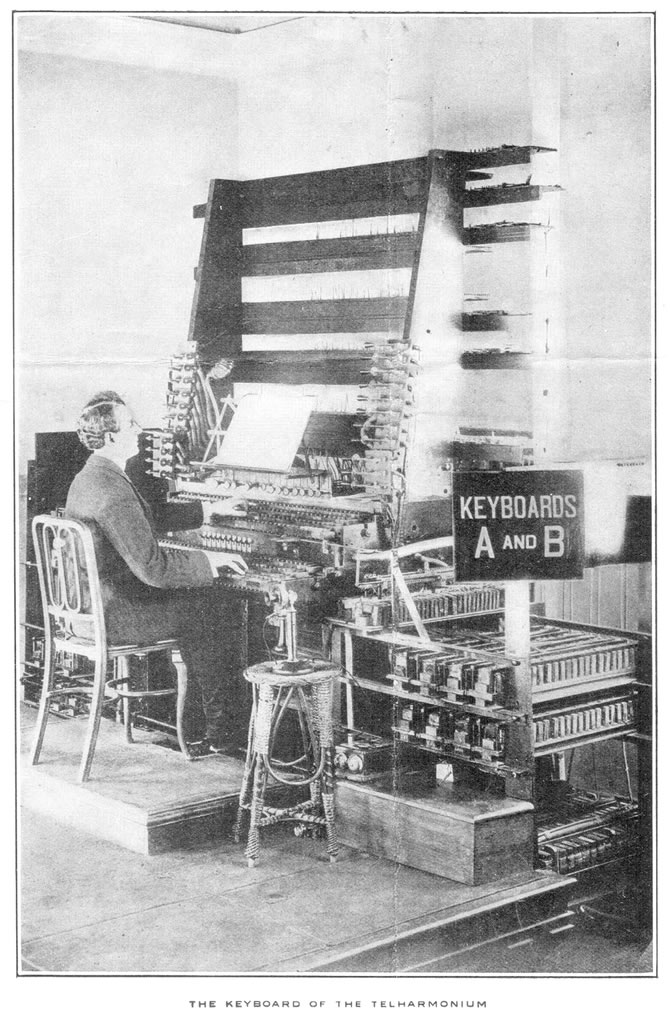

Other significant developments around this time include the Coupleux-Givelet synthesizer (Edouard Coupleux and Joseph Givelet, 1929), the first extensively programmable synthesizer, which allowed for dynamic variation, modulation, envelope shaping (how sounds are attacked, sustained, and released), and filtering. Coupleux and Givelet were probably the first to use the term synthesizer.

The success of the Coupleux-Givelet was overshadowed by the commercial success of the Hammond organ (1935) and the Novachord (1939) by Laurens Hammond of Evanston, Illinois.

In 1937, Harald Bode produced the Warbo Formant-Orgel, an early polyphonic poly-timbral keyboard, and the next year, he unveiled the Melodium, a sophisticated monophonic touch-sensitive keyboard. Ten years later (1947) Bode would produce the Melochord, an early electronic split-keyboard instrument.

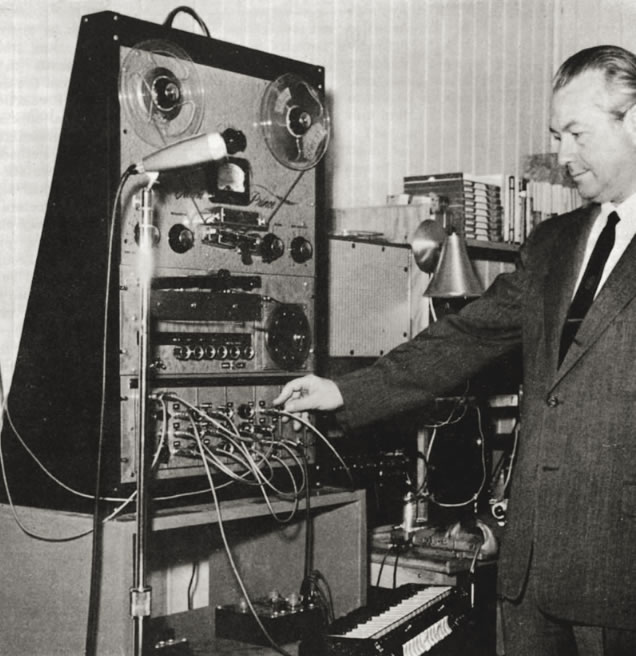

After the period from roughly 1940 to 1960, which saw the developments outlined earlier in this lesson, came a major industry milestone: the first commercially successful voltage-controlled modular synthesizer, developed by Robert Moog in 1964 in Trumansburg, New York. In the Moog synthesizer, the different sound modules are connected together using patch cords and switches to create a patch. The voltages from the modules could function as audio signals, as control voltages to shape sound parameters, or as logic conditions to create program changes.

Around the same time that Moog was building synthesizers on the East Coast of the US, Don Buchla was busy creating his electronic music equipment company, Buchla and Associates (1962), in Berkeley, California. Under a grant from the Rockefeller Foundation, Buchla completed the Buchla Series 100, his first modular synthesizer, in 1963.

Beginning in the late 1950s, the application of computer technology to music meant that new music languages were needed to represent and control musical information. The first of these, MUSIC I and MUSIC II, were developed by Max Mathews, a telecommunications engineer at Bell Telephone Laboratories' Acoustic and Behavioral Research Department in New Jersey.

Beginning in the 1970s, the advent of the microprocessor and integrated-circuit technology allowed synthesizers themselves to become digital rather than analog. A microprocessor is a computer's central processing unit (CPU) on a single chip. This meant that the functions of hardware sound modules could now be duplicated by software. Furthermore, the stability, reliability, portability, and control of sound parameters of synths improved dramatically. Synthesizers, like computers, soon became programmable: a group of settings representing any given sound or sounds could now be digitally stored, manipulated, and recalled from computer memory as needed. From then on, live performance on synthesizers became increasingly practical.

With sampling and digital recording, made possible by the Analog-to-Digital Converter (ADC) mentioned earlier (also pioneered, incidentally, by Max Mathews), new sounds became available in the digital world. Samplers entered the game.

The Fairlight CMI (Computer Musical Instrument), developed in Australia by Peter Vogel and Kim Ryrie and based on a dual-6800 microprocessor computer designed by Tony Furse, rose to international prominence in the early 1980s and competed in the market with the Synclavier from New England Digital Corporation of Vermont, USA.

The 1980s and 1990s saw a veritable explosion of different devices from companies such as Roland, Korg, Ensoniq, E-mu, Kurzweil, Yamaha, and Kawai, as well as the birth of MIDI. As revolutionary as they were, those first systems were still very costly, ranging from $25,000 to $200,000 (US dollars).

In the 2000s, however, falling prices and rapid advances in computer technology allowed music sequencing and recording software such as Pro Tools, Logic Audio, and many others to be developed and offered at affordable prices. These sophisticated software packages, coupled with increasingly affordable computers and cloud music storage and streaming, offer almost limitless creative possibilities to contemporary musicians, whatever their field of specialization.